Quantum computing attracts a certain kind of bad writing.

Everything becomes a revolution before the machine is reliable. Every qubit milestone becomes a turning point. Every roadmap sounds like a short bridge between fragile experiments and civilization-changing compute.

That is the wrong frame for what matters now.

The real threshold in quantum computing is fault tolerance. Not because the phrase sounds technical, but because it marks the point where the field stops being mostly about impressive but delicate demonstrations and starts becoming a discipline of reliable logical computation.

That shift is not just a physics story.

It is a manufacturing story. A decoding story. A cryogenics story. A packaging story. A systems-integration story.

IBM’s Starling roadmap matters for one reason above all: it makes the challenge concrete enough to judge. That is more useful than generic quantum ambition.

Fault tolerance is where quantum stops being demo theater

The core problem is simple to describe even if it is hard to solve.

Physical qubits are noisy. They decohere, drift, and accumulate errors faster than useful large-scale computation can tolerate. So the field’s real objective is not merely to create more physical qubits. It is to combine enough of them, with enough control and error correction, to produce logical qubits that behave reliably enough to support meaningful computation.

That is the difference between a machine that can impress researchers and a machine that can sustain work.

This is why fault tolerance is the actual gate. Until it becomes operational, quantum remains trapped in a regime where every gain has to be qualified by its fragility.
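A toy calculation makes the gate concrete. The sketch below uses a classical repetition code with majority voting, which is far simpler than any quantum code and is not the scheme any particular roadmap relies on. The function name and the error rates are illustrative assumptions. It shows only the basic trade: below a break-even error rate, redundancy suppresses the logical error rate, and above it, redundancy makes things worse.

```python
from math import comb


def logical_error_rate(p: float, n: int) -> float:
    """Chance that a majority vote over n noisy copies gives the wrong answer.

    p is the (assumed independent) flip probability of each copy.
    n must be odd so the vote can never tie.
    """
    if n % 2 == 0:
        raise ValueError("n must be odd")
    # The vote fails when more than half of the copies have flipped.
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    for p in (0.01, 0.6):  # hypothetical physical error rates
        for n in (1, 3, 5, 7):
            print(f"p={p}, copies={n}, logical error={logical_error_rate(p, n):.6f}")
```

With a 1% physical error rate, three copies already bring the logical rate to about 0.03%. At 60%, the same code performs worse than a single unprotected bit. Real quantum codes must also handle phase errors and faulty measurements, so their break-even points sit far below this toy model's 50%.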

What IBM Starling is really claiming

IBM’s Starling program is important less because it promises a distant machine and more because it lays out a stack of interdependent requirements that can be inspected, doubted, and tracked.

The headline promise centers on logical qubits and error-corrected operations: IBM has publicly targeted a system of roughly 200 logical qubits able to run on the order of 100 million operations, with delivery planned for 2029. That matters because it moves the conversation from vague “quantum advantage someday” language toward a more disciplined question: can one company integrate all the ingredients needed for stable logical performance?

That is a better standard.

A public roadmap does not prove success. But it does create something the field badly needs: auditable ambition.

The deeper value of Starling is that it forces observers to look at the whole machine, not just the prettiest piece of it.

Logical qubits are an industrial problem

This is the part quantum coverage often hides under technical glamour.

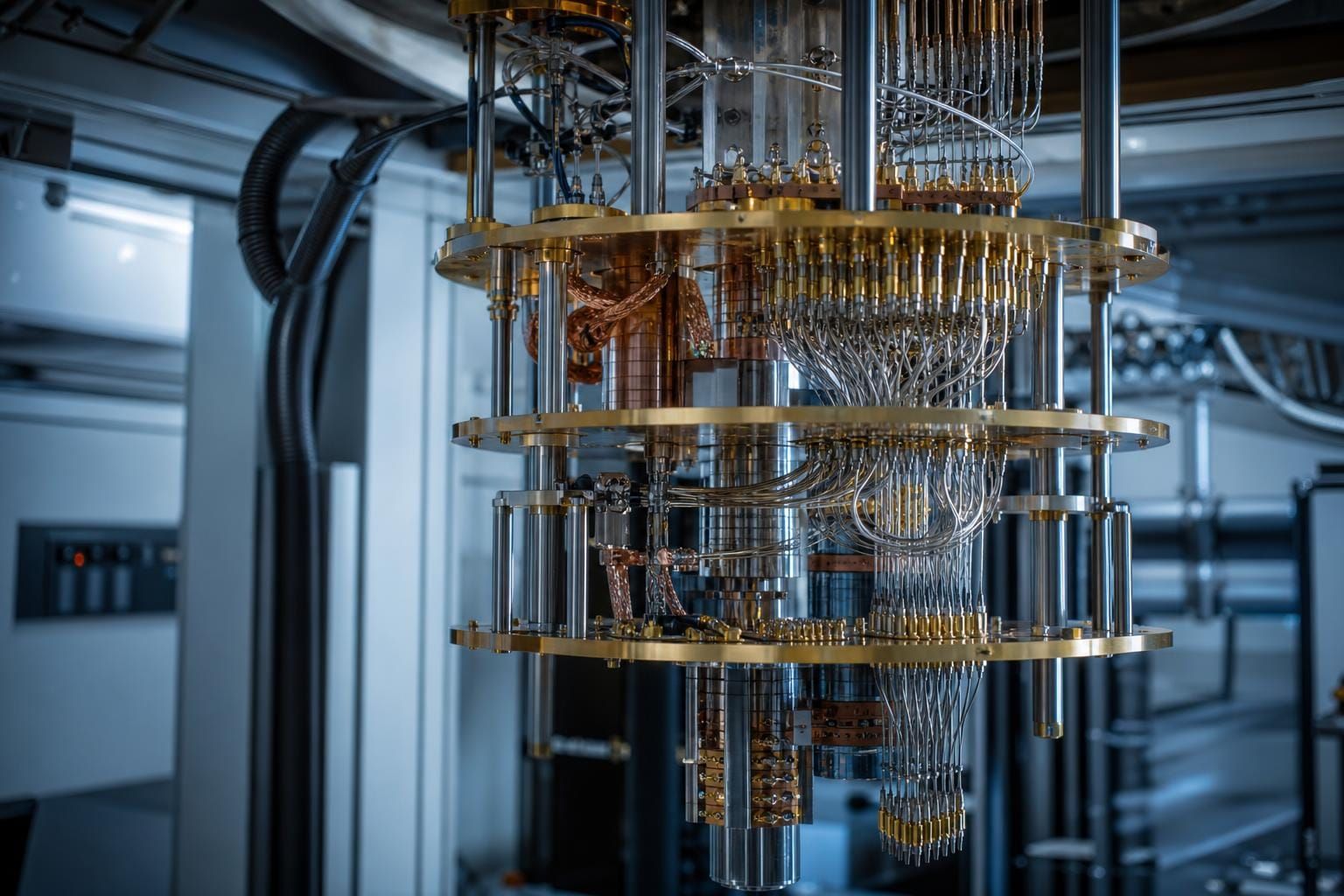

A logical qubit is not just a better qubit. It is a system achievement built on overhead, coordination, and discipline. To make logical computation work, you need many physical qubits, carefully arranged error-correction schemes, stable control electronics, fast decoding, acceptable cycle times, and hardware packaging that does not collapse under its own complexity.

That means progress depends on more than elegant theory.

It depends on whether fabrication yield is high enough, whether modules can be connected without intolerable crosstalk, whether the refrigeration and cabling problem can be kept under control, and whether the decoding hardware can keep pace with the rate at which the machine generates syndrome data.

In other words: quantum computing becomes real at the point where industrial engineering catches up to scientific insight.

That is why fault tolerance should be read as infrastructure, not mythology.

The real bottlenecks are not mysterious

The field already knows a lot about where the pain lives.

Decoding is one of the clearest examples. Error correction is only useful if the system can interpret and respond to error syndromes fast enough to keep the computation stable. If the decoder cannot keep up, the elegance of the code does not save you.
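The keep-up condition can be written as simple bookkeeping. The following is a minimal sketch, assuming one syndrome round per cycle and a single decoder with a fixed time per round. The function name and all timings are hypothetical, and the model ignores pipelining, parallel decoders, and latency effects.

```python
def backlog_after(rounds: int, cycle_time_us: float, decode_time_us: float) -> float:
    """Syndrome rounds still waiting for the decoder after `rounds` cycles.

    cycle_time_us:  time for the hardware to produce one syndrome round
    decode_time_us: time for the decoder to process one syndrome round
    """
    elapsed_us = rounds * cycle_time_us
    # The decoder cannot process rounds that have not been produced yet.
    decoded = min(rounds, elapsed_us / decode_time_us)
    return rounds - decoded


if __name__ == "__main__":
    # Hypothetical numbers: a 1 microsecond cycle with a fast and a slow decoder.
    for decode_time in (0.5, 2.0):
        for rounds in (1_000, 10_000):
            waiting = backlog_after(rounds, 1.0, decode_time)
            print(f"decode={decode_time}us rounds={rounds} backlog={waiting:.0f}")
```

In this model a decoder that is faster than the cycle time never falls behind, while one that is even slightly slower accumulates a backlog that grows without bound as the computation runs longer.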

Then there is packaging and modularity. It is one thing to show a promising threshold result on a smaller system. It is another to connect larger modules, manage signal integrity, limit crosstalk, and preserve performance as complexity increases.

Cryogenics is another brutal constraint. Quantum roadmaps often sound weightless until you remember that all this has to live inside physical cooling, control, and wiring limits that do not care about investor decks.

And then there is yield. The more complex the architecture becomes, the less forgiving the manufacturing problem is. A roadmap can be theoretically clean and still die inside fabrication realities.
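The arithmetic behind that is unforgiving. Here is a rough sketch, assuming every component must work and that defects are independent; the function names and yield figures are hypothetical, and real designs can soften the result with redundancy or defect-tolerant layouts.

```python
def module_yield(component_yield: float, components: int) -> float:
    """Probability that every component in a module is good.

    Assumes independent defects and no redundancy: one bad part kills the module.
    """
    return component_yield**components


def modules_to_fabricate(component_yield: float, components: int) -> float:
    """Expected number of modules built per fully working module."""
    return 1.0 / module_yield(component_yield, components)


if __name__ == "__main__":
    for parts in (10, 100, 1000):  # hypothetical component counts
        y = module_yield(0.99, parts)
        print(f"{parts} components at 99% each -> module yield {y:.4%}")
```

Under these assumptions, a 99% per-component yield leaves only about a 37% chance that a 100-component module is fully functional, and the odds fall off exponentially from there.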

None of this is glamorous. That is exactly why it matters.

The competitive race is about integrated stacks, not slogans

Quantum competition still gets narrated as if one company will win because its qubit concept sounds the most futuristic.

That is too shallow.

Different players are pursuing different routes: superconducting systems, trapped ions, topological claims, alternative error-correction strategies, modular network designs. Those differences matter. But the deeper race is about integration.

Who can coordinate architecture, control, decoding, packaging, and scale into one system that keeps working as it grows?

That is the real contest.

A breakthrough in one layer is not enough if the rest of the stack cannot carry it. A beautiful threshold paper does not automatically become a usable machine. A dramatic hardware claim does not erase the burden of system-level integration.

This is why public claims should now be judged less like scientific prophecy and more like industrial execution plans.

For a broader look at fault-tolerance pathways under different architectures, see Scalability vs. Precision: How Willow and Oxford Are Shaping the Future of Fault-Tolerant Quantum Computing.

Why this matters

Fault-tolerant quantum computing matters because it is the point where quantum stops being mostly a research performance and starts becoming a strategic compute capability. But that transition will not be won by abstract promise alone. It will be won by whoever can industrialize reliability across the whole stack: error correction, hardware control, cryogenics, packaging, and modular scale. The field’s real test now is not whether quantum sounds powerful. It is whether anyone can make stability manufacturable.

Conclusion

IBM Starling is useful because it gives the field something harder than excitement.

It gives it a test.

Not a test of whether quantum computing is theoretically profound. That part is settled enough to stop pretending otherwise.

A test of whether reliable logical computation can be built with industrial discipline instead of rhetorical momentum.

That is the line that matters now.

If fault tolerance becomes operational, the quantum story changes fast.

If it does not, then even the best demos remain trapped on the wrong side of usefulness.

So the right way to read the next few years is not as a contest of headlines.

It is as a contest to make reliability real.

Read next: Scalability vs. Precision: How Willow and Oxford Are Shaping the Future of Fault-Tolerant Quantum Computing and Quantum Drug Discovery Gets Real: A 20× Wake-Up Call for Longevity