AI is no longer just eating software. It is eating electricity.

That shift matters more than a lot of AI coverage still admits. The most important question is no longer only which lab has the best model, the best benchmark, or the most aggressive product roadmap. It is who can actually power the compute they keep promising.

That is why data center energy has moved from background infrastructure into strategic territory. Training runs keep growing, inference demand keeps compounding, and the physical cost of supporting AI at scale is starting to shape where the industry can expand at all.

Once that happens, electricity stops being a utility detail. It becomes a bottleneck.

Three power options now sit at the center of that bottleneck conversation: fusion, enhanced geothermal, and small modular reactors, or SMRs. Each promises firm, low-carbon power. Each carries very different timelines, risks, and practical constraints. And each tells us something important about what the AI buildout is becoming.

This is no longer mainly a story about cleaner energy branding. It is a story about how the AI economy will secure reliable baseload power without running headfirst into climate, grid, and permitting limits.

Why AI power became a real strategic problem

For years, the default assumption was that better chips and bigger clusters would simply find power somewhere.

That assumption looks weaker every month.

The International Energy Agency now projects that global electricity demand from data centers could more than double by 2030, to roughly 945 terawatt-hours in its base case, with AI as a major driver of that increase. The exact national and regional outcomes will differ, but the pattern is clear: data centers are becoming a more visible piece of the grid, not an invisible backend.

That changes the procurement logic.

If you are building or financing AI infrastructure, the target is no longer just cheap electricity on paper. The target is reliable 24/7 power, preferably low-carbon, available at the right scale, in the right place, on a timeline that matches data center deployment.

That is a much harder requirement set than “buy more renewable credits.”
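One way to see the gap between annual credit accounting and true 24/7 supply is to score the same day of clean generation both ways. The sketch below is illustrative only: the function names, the flat 100 MWh-per-hour load, and the solar-like supply shape are all assumptions made up for the example, not figures from any operator or report.

```python
def annual_match(load_mwh, clean_mwh):
    """Volume-based coverage: total clean energy divided by total load, capped at 1.0.

    This is roughly how credit-style accounting works: surplus in one hour
    can offset a shortfall in another.
    """
    return min(1.0, sum(clean_mwh) / sum(load_mwh))


def hourly_match(load_mwh, clean_mwh):
    """Hour-by-hour coverage: clean energy only counts up to the load in the same hour."""
    matched = sum(min(load, clean) for load, clean in zip(load_mwh, clean_mwh))
    return matched / sum(load_mwh)


# Hypothetical 24-hour profile (assumed values, for illustration only).
load = [100.0] * 24                                  # flat data center load, MWh per hour
solar = [0.0] * 6 + [200.0] * 12 + [0.0] * 6         # daytime-only supply, same daily total
firm = [100.0] * 24                                  # firm supply matching load every hour

print("solar, annual-style:", annual_match(load, solar))
print("solar, hourly:      ", hourly_match(load, solar))
print("firm, hourly:       ", hourly_match(load, firm))
```

Under these assumed numbers, the daytime-only supply looks fully matched on a volume basis but covers only half the load hour by hour, while the firm supply covers all of it. That difference is the practical reason firm sources keep coming up in data center procurement.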

It also helps explain why this conversation is starting to resemble industrial policy. Once AI depends on hard-to-secure electricity rather than just cloud abstraction, questions about interconnection queues, local grid stress, permitting, community tradeoffs, and physical siting stop being side issues.

They become central constraints on compute growth.

Fusion: the high-upside wildcard

Fusion remains the most emotionally powerful option in the mix.

The appeal is obvious. If practical fusion arrives, it offers the possibility of compact, dispatchable, carbon-free electricity with extraordinary symbolic and strategic value. That is why every credible sign of commercial movement gets outsized attention.

Helion’s agreement to supply Microsoft has become the clearest example of that shift in public imagination. It matters not because fusion is already bankable at hyperscale, but because the relationship moved the conversation from abstract promise toward customer-linked delivery pressure.

That is a real threshold change.

Still, the sober reading matters more than the spectacle reading.

Fusion is not yet the near-term answer to AI’s energy crunch. It remains a first-of-a-kind engineering and commercialization bet with unanswered questions around consistent output, operational reliability, economics, regulatory treatment, and timeline realism. Even optimistic projects still need to prove that they can deliver power on contract, at useful capacity factors, with commercially credible cost structures.

So fusion belongs in the conversation for one reason above all: if it works, it changes the long-term shape of energy abundance. But right now, it is still a future option with unusually high upside and unusually high execution risk.

Enhanced geothermal: the most practical near-term fit

If fusion is the wildcard, enhanced geothermal looks like the most operationally legible option.

That is because its value proposition lines up unusually well with the actual needs of AI campuses. Enhanced geothermal systems aim to deliver firm power without depending on weather, and they can be developed in a modular, drill-by-drill way that feels much more like industrial deployment than moonshot energy theater.

That matters.

Geothermal is easier to take seriously in this context because it addresses the real siting problem. AI data centers do not just need low-carbon electricity in the abstract. They need dependable electricity close enough to load, or at least close enough to useful transmission, to avoid years of delay and grid friction.

That is why geothermal developers increasingly talk in terms of data center corridors, co-location logic, and behind-the-meter potential. The pitch is not just “clean power.” The pitch is “firm power that can be matched to digital infrastructure with less drama than a lot of other energy pathways.”

That does not make geothermal frictionless.

Enhanced geothermal still faces subsurface uncertainty, drilling complexity, cost discipline questions, and environmental scrutiny around seismicity and water systems. But among the major low-carbon baseload candidates, it increasingly looks like the one that best fits AI’s demand for faster deployment and localized reliability.

In other words: geothermal is not the flashiest story. It may be the most productizable one.

SMRs: the mature nuclear logic with slower clocks

Small modular reactors occupy a different strategic lane.

They are less speculative than fusion and often easier for institutions to model than enhanced geothermal, especially if those institutions already understand nuclear procurement, licensing, and long-lived infrastructure assets.

The basic case for SMRs is straightforward. They promise firm, carbon-free power with the reliability profile that large industrial operators already value. For multi-hundred-megawatt or gigawatt-class AI campuses, that kind of concentrated, around-the-clock output is extremely attractive.

They also benefit from something fusion still lacks: a clearer regulatory lineage.

Projects such as Ontario Power Generation’s BWRX-300 build at Darlington matter because they show that SMRs are moving through real construction and licensing pathways rather than living entirely inside pitch decks. That matters to utilities, governments, and long-horizon infrastructure investors.

But AI has a timing problem, and that is where SMRs run into pressure.

Even if the technology is strategically compelling, the development cycle is still slow relative to the speed of AI demand growth. Cost overruns, schedule slippage, public acceptance battles, and licensing complexity all remain part of the equation. So SMRs may be highly relevant to the medium-term AI power stack without being the easiest answer to the immediate power crunch.

That makes them neither hype nor cure-all. They are the durable, institution-friendly option whose biggest weakness is time.

Who is most likely to win the race first?

The honest answer is that the AI power race probably does not produce one winner.

Near term, enhanced geothermal looks best positioned to solve real deployment problems quickly enough to matter. It aligns with the need for firm power, localized siting, and cleaner electricity without requiring a full civilizational leap in physics or permitting culture.

Medium term, SMRs may become the more durable answer for regions and operators willing to bear longer timelines in exchange for industrial-scale reliability.

Long term, fusion remains the category disruptor. But it only earns that title if projects move from symbolic milestones to repeatable commercial output.

That means AI is likely to pay for portfolios, not purity.

The practical future may look less like one triumphant energy technology and more like a layered strategy: geothermal where it can be developed fast, SMRs where institutions can support slower but larger commitments, grid upgrades where politics allows them, and storage or transitional backup systems filling the gaps.

That is less elegant than a single winner story. It is also much more believable.

The deeper issue is not energy alone. It is geography, governance, and access.

The most important thing about this race is not just which technology wins on engineering merit.

It is who gets to build first, where, and under what political conditions.

Firm clean power is not evenly distributed. Neither are permitting systems, transmission capacity, local public tolerance, water availability, or capital access. Once AI growth depends on these variables, compute starts behaving less like pure software scale and more like heavy industry.

That has consequences.

Regions that can pair energy infrastructure with data center growth may gain a real strategic edge. Regions that move too slowly on siting, interconnection, or power planning may lose AI investment even if they have strong talent or capital. And communities asked to host these facilities will increasingly push harder on the local tradeoffs: land, water, noise, reliability, and who actually benefits.

This is where the energy story turns into a governance story.

The future of AI infrastructure will not be decided only by chip performance or model architecture. It will also be decided by which societies can build enough clean, reliable, politically durable power to support the loads they keep inviting.

Why this matters

AI data center power is becoming a test of whether digital ambition can survive physical reality. Fusion, geothermal, and SMRs are not just competing technologies. They represent different answers to the same question: how do you keep scaling compute without deepening grid stress, emissions, and local backlash? The winners will not simply be the companies with the strongest models. They will be the operators, regions, and governments that learn how to secure firm clean power fast enough to make AI growth physically credible.

Conclusion: AI is forcing a return to infrastructure thinking

The cleanest way to read this moment is also the least glamorous.

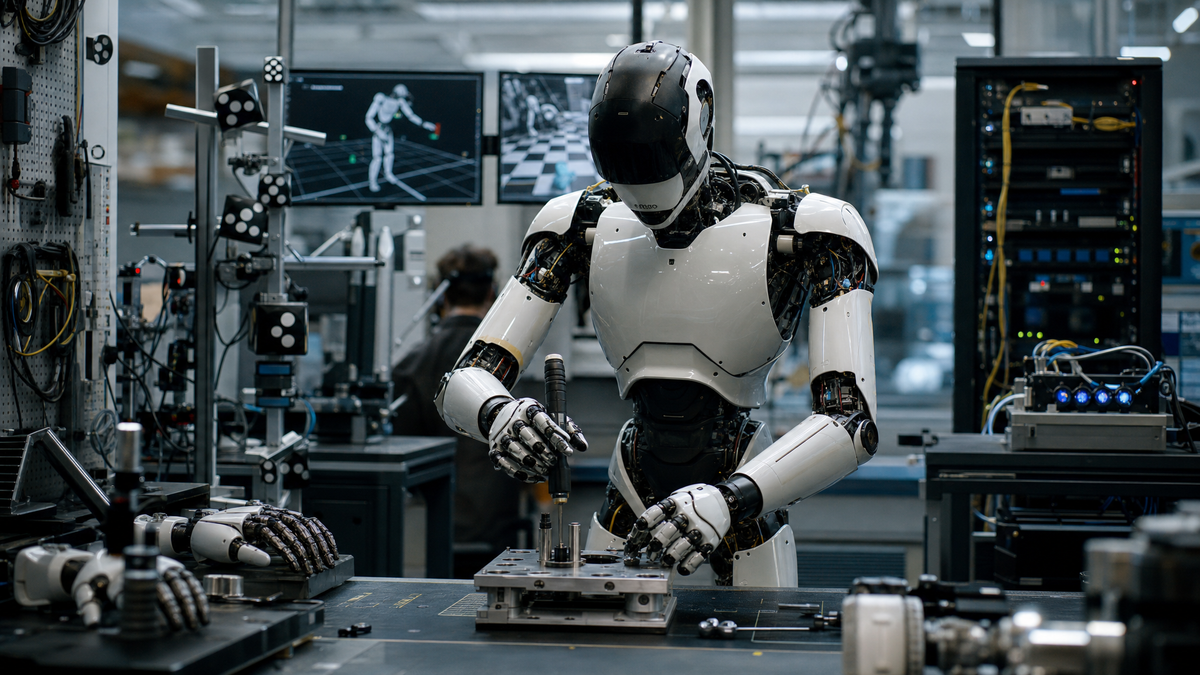

AI is pushing the tech industry back into the world of physical constraints.

That means power generation matters again. Grid planning matters again. Permitting matters again. Geography matters again. And the companies that pretend intelligence can scale independently of those systems are likely to hit harder limits than they expect.

Fusion may yet transform the long game. Geothermal may become the pragmatic bridge. SMRs may anchor the heavier institutional buildout. But the biggest shift is broader than any one technology.

AI has made electricity strategic.

And once that happens, the future of compute starts looking a lot less like software alone and a lot more like infrastructure politics.
