Humanoid robotics does not need more theatrical demos. It needs a stack that can actually make robots learn faster, adapt better, and survive messy real environments.

That is why Isaac GR00T matters.

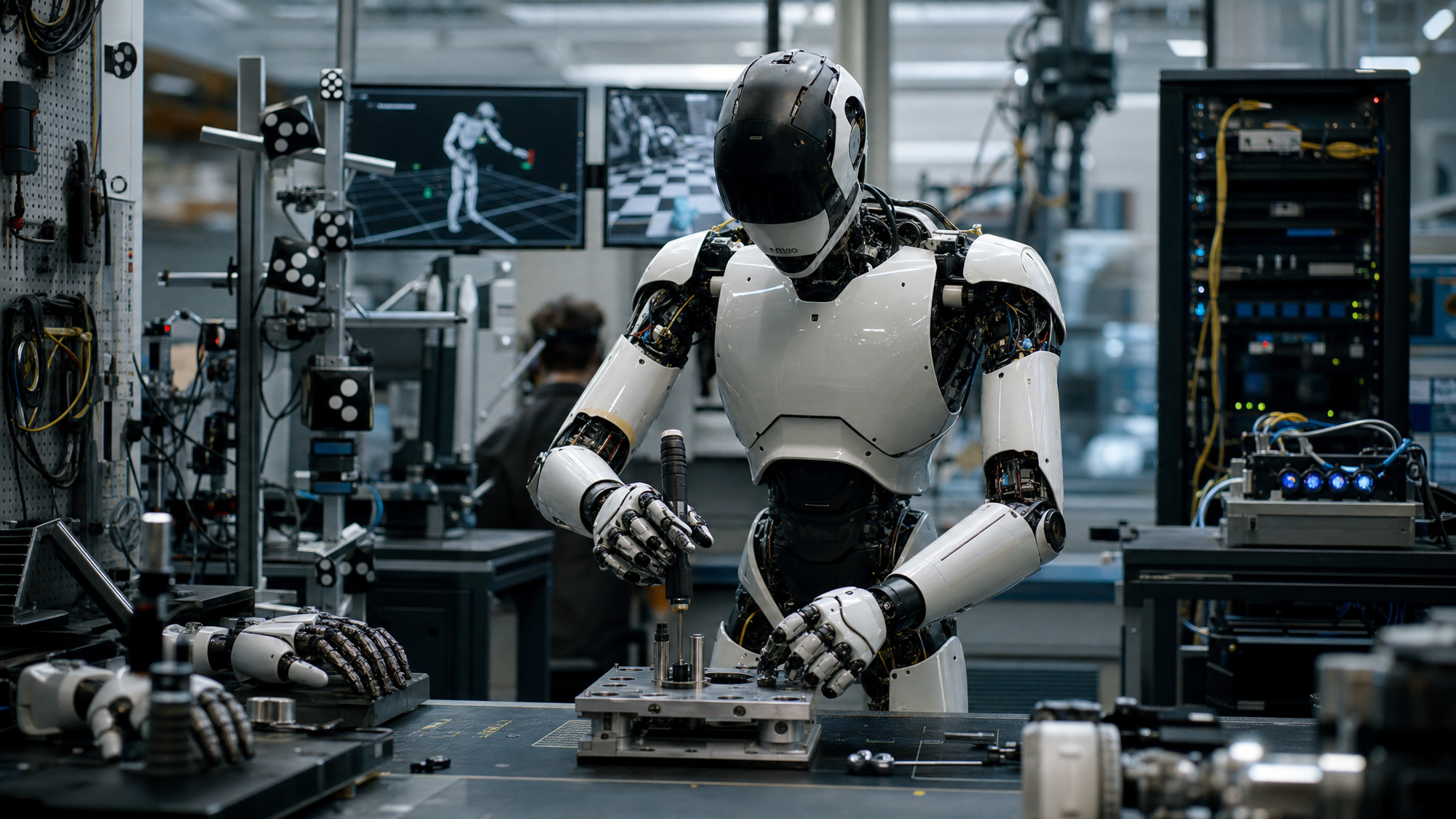

NVIDIA is not only pitching another robot model. It is building a full operating stack for humanoids: vision-language-action models, synthetic data generation, simulation tooling, physics infrastructure, and on-robot compute. The deeper story is not “look, the robot can grab something.” The deeper story is that NVIDIA wants to standardize how humanoid capabilities are trained, evaluated, and deployed.

That is a much bigger ambition than a flashy lab clip.

Why GR00T matters now

Humanoid robots are still stuck in a frustrating middle zone.

They are impressive enough to attract headlines, but not reliable enough to feel like infrastructure. The bottleneck is not only hardware dexterity. It is the whole learning loop: how you collect data, how you scale behavior, how you transfer from simulation to reality, and how you update capability without rebuilding everything from scratch.

That is the exact gap GR00T tries to close.

Instead of treating the robot as a one-off marvel, NVIDIA is treating humanoids like a platform problem. That means standardizing the layers underneath the behavior: models, synthetic data, simulation, physics, and edge inference.

If that stack works, humanoids become less like custom science projects and more like trainable systems.

What Isaac GR00T actually is

At the center is a Vision-Language-Action model architecture designed for humanoid control.

The public framing around GR00T N1 describes a dual-system approach: one layer handles perception and instruction following at a lower frequency, while another produces continuous motor output at a high rate. The point is not just to make the model “smarter.” It is to separate slower, reasoning-like interpretation from fast embodied action.

That matters because robot intelligence is not one thing. A humanoid has to parse instructions, understand objects and scenes, maintain task structure, and then produce stable physical behavior in real time.

NVIDIA’s bet is that this model becomes more effective when paired with the rest of its infrastructure rather than treated as an isolated checkpoint.
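To make the dual-system idea concrete, here is a minimal sketch of a two-rate control loop. It is not NVIDIA's implementation or API. The names `slow_layer`, `fast_layer`, and `Plan`, the single-joint "robot", and the 12:1 update ratio are all assumptions chosen only for illustration. The slow layer reinterprets the instruction occasionally, while the fast layer emits a motor command on every tick using the most recent plan.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Plan:
    """Hypothetical slow-layer output: a target position for one joint."""
    target: float


def slow_layer(instruction: str, position: float) -> Plan:
    """Stand-in for the low-frequency perception and language layer.

    A real system would run a vision-language model here. This toy version
    only checks the instruction for a keyword (an assumption for the sketch).
    """
    return Plan(target=1.0 if "raise" in instruction else 0.0)


def fast_layer(plan: Plan, position: float, gain: float = 0.2) -> float:
    """Stand-in for the high-rate action layer: one proportional step."""
    return gain * (plan.target - position)


def run(instruction: str, steps: int = 60, slow_every: int = 12) -> Tuple[List[float], int]:
    """Run the loop: replan every `slow_every` ticks, act on every tick."""
    position = 0.0
    plan = Plan(target=position)
    slow_calls = 0
    trajectory: List[float] = []
    for tick in range(steps):
        if tick % slow_every == 0:
            # Slow path: refresh the plan from the instruction and state.
            plan = slow_layer(instruction, position)
            slow_calls += 1
        # Fast path: always produce a motor command from the latest plan.
        position += fast_layer(plan, position)
        trajectory.append(position)
    return trajectory, slow_calls


trajectory, slow_calls = run("raise arm")
print(f"slow-layer calls: {slow_calls}, fast-layer steps: {len(trajectory)}")
```

The design point is the decoupling: the fast layer never waits for the slow one, so motor output stays smooth even when interpretation is expensive.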

The real advantage is the data flywheel

The most important part of the GR00T story may not be the model at all.

It may be the data engine.

Humanoid robotics has a brutal data problem. Real robot data is slow, expensive, and hard to collect at useful scale. That is why the synthetic pipeline matters so much. If a system can start from a small number of real demonstrations, generate large synthetic trajectory sets, and then use those to improve policy behavior, the economics of training change dramatically.

That is where NVIDIA’s broader stack becomes strategic.

Simulation tools, Omniverse-linked environments, GR00T-Dreams style synthetic generation, and related training workflows do not just make the demos prettier. They create a repeatable path from a little real data to a much larger behavior set.

That is how humanoid development stops being bottlenecked by manual collection alone.
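The shape of that flywheel can be sketched in a few lines. This is a deliberately simple stand-in, not how GR00T-Dreams or any NVIDIA tool works: it multiplies a handful of "real" demonstrations by adding Gaussian noise and then filters out implausible results. The function names, the noise level, and the plausibility rule are all hypothetical.

```python
import random
from typing import List

Trajectory = List[float]  # one joint position per timestep (toy representation)


def synthesize(demos: List[Trajectory], per_demo: int,
               noise: float = 0.05, seed: int = 0) -> List[Trajectory]:
    """Generate `per_demo` noisy variants of each real demonstration."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    variants: List[Trajectory] = []
    for demo in demos:
        for _ in range(per_demo):
            variants.append([x + rng.gauss(0.0, noise) for x in demo])
    return variants


def is_plausible(traj: Trajectory, max_step: float = 0.5) -> bool:
    """Hypothetical filter: reject trajectories with large jumps between steps."""
    return all(abs(b - a) <= max_step for a, b in zip(traj, traj[1:]))


# Two hand-written "real" demonstrations (made-up numbers for illustration).
real_demos = [[0.0, 0.2, 0.4, 0.6], [0.0, 0.3, 0.6, 0.9]]

candidates = synthesize(real_demos, per_demo=100)
synthetic = [t for t in candidates if is_plausible(t)]

print(f"{len(real_demos)} real demos -> {len(synthetic)} usable synthetic trajectories")
```

The generate-then-filter structure is the part worth noticing. Whatever produces the synthetic trajectories, some check has to decide which ones are good enough to train on.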

Why this is more than a robotics model release

AI readers who treat GR00T as a pure model launch will misread it.

The more serious interpretation is that NVIDIA is trying to become the default systems layer for physical AI.

That includes:

- the model
- the simulation environment
- the synthetic data engine
- the physics layer
- the training infrastructure
- the edge compute layer

That is a familiar move if you have watched AI infrastructure elsewhere. The winning position is often not the single clever artifact. It is the stack other people end up building on.

In humanoids, that matters even more because the sim-to-real gap is so punishing. If one company can make the whole training and deployment loop more legible, more standardized, and faster to iterate, it gains influence far beyond one benchmark.

What is real, and what is still unresolved

The strong part of the story is clear.

GR00T appears to be pushing toward more practical language-conditioned control, stronger synthetic data leverage, and a more systematized training pipeline. Those are exactly the kinds of improvements humanoid robotics needs.

But the unresolved part matters just as much.

Humanoids still face the same structural problems:

- sim-to-real transfer remains fragile
- safety in physical human environments is still hard
- contact-rich manipulation is still difficult
- edge-case reliability matters more than highlight reels
- vendor-stack dependence can become a real strategic constraint

So the right read is not that GR00T solves humanoid robotics.

It is that NVIDIA is building one of the first genuinely plausible operating stacks for making humanoids less brittle over time.

Who is affected first

The immediate beneficiaries are robotics teams that want a shorter route from experimentation to deployment.

A more standardized stack can reduce reinvention. That matters for startups, research groups, industrial robotics programs, and anyone trying to build embodied systems without constructing every layer from scratch.

But the power concentration question follows immediately.

If the best route into humanoid capability ends up tightly coupled to one vendor’s simulation, training, data, and edge-compute ecosystem, then the field becomes easier to accelerate and harder to decentralize at the same time.

That is the tradeoff.

More progress. More operational coherence. Potentially more dependence.

Why this matters

Isaac GR00T matters because it reframes humanoid robotics as a stack problem, not just a model problem. If NVIDIA can make simulation, synthetic data, control models, physics, and on-robot compute work together as one repeatable pipeline, humanoids become easier to train and harder to dismiss as demo theater. But that progress also concentrates leverage in the ecosystem controlling the stack. The real question is not only whether robots get better hands. It is who gets to define the infrastructure they learn from and operate inside.

Conclusion: the story is the stack

The easiest way to misunderstand GR00T is to focus on the robot hand and miss the training loop behind it.

NVIDIA is trying to do for humanoids what platform companies do everywhere else: make the surrounding system so useful that the model becomes only one layer of a larger dependency.

That is why GR00T matters.

Not because it proves humanoids are solved. But because it shows the next serious battle in robotics is not just about embodiment. It is about who owns the pipeline from simulation to behavior to deployment.

That is a much bigger story than one successful grasp.
